A year ago, my colleague Dr. Seddik Belkoura presented some challenges that a Data Analyst may face in a Data Mining pipeline. The aim of this post is to expand on the idea that interpreting and analysing data are not trivial tasks. Because of this, it is not uncommon for Data Analysts to take shortcuts when managing and interpreting data, often trying to make the datasets and the results fit their preconceived notions of how things are. These are some of the most common data fallacies today:

More data mining pitfalls: top 5 data fallacies

Dario Martinez

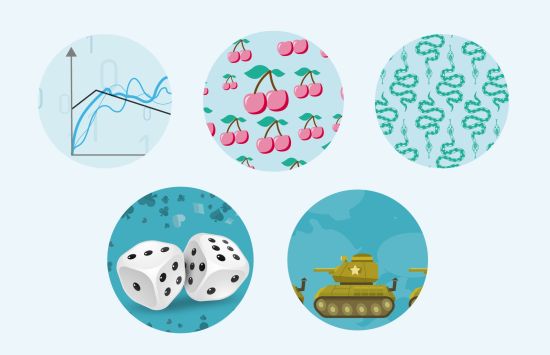

1. Overfitting and cross-validation

When looking at data, analysts want to understand the underlying relationships. Sometimes, however, a model is tailored so closely to the training dataset that it captures its noise rather than the general trend, and therefore fails to generalise to new data. This is called overfitting, and it might be the most well-known “fallacy” in Data Science. Some Data Analysts don’t even realise that the phrase “applying cross-validation prevents overfitting” is also a fallacy.

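Indeed, cross-validation does not prevent overfitting: it helps to assess how much a method overfits, by measuring its error on data that was held out during fitting. This also implies that the same cross-validation run cannot be used both to tune or select a model and to give an unbiased estimate of how much the selected model overfits; that assessment needs data the selection step never saw, such as a separate hold-out set or nested cross-validation.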
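To make the distinction concrete, here is a minimal sketch in Python, assuming NumPy is available and using a synthetic noisy sine curve as a stand-in dataset (the data, the polynomial degrees and the helper names are illustrative assumptions, not part of any real analysis). It fits polynomials of increasing degree and compares the training error with a plain 5-fold cross-validated error: cross-validation reveals that the high-degree model overfits, but it does nothing to stop that model from being fitted.

```python
import numpy as np
from numpy.polynomial import Polynomial


def make_data(n=40, noise=0.3, seed=0):
    """Hypothetical dataset: a sine curve plus Gaussian noise."""
    rng = np.random.default_rng(seed)
    x = np.sort(rng.uniform(0.0, 1.0, n))
    y = np.sin(2 * np.pi * x) + rng.normal(0.0, noise, n)
    return x, y


def mse(y_true, y_pred):
    """Mean squared error."""
    return float(np.mean((y_true - y_pred) ** 2))


def train_error(x, y, degree):
    """Error measured on the same points the model was fitted to."""
    model = Polynomial.fit(x, y, degree)
    return mse(y, model(x))


def cv_error(x, y, degree, k=5, seed=1):
    """Plain k-fold cross-validation: fit on k-1 folds, score on the held-out fold."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(x)), k)
    scores = []
    for i, test_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        model = Polynomial.fit(x[train_idx], y[train_idx], degree)
        scores.append(mse(y[test_idx], model(x[test_idx])))
    return float(np.mean(scores))


if __name__ == "__main__":
    x, y = make_data()
    for degree in (1, 3, 12):
        print(f"degree {degree:2d}: train MSE {train_error(x, y, degree):.3f}, "
              f"5-fold CV MSE {cv_error(x, y, degree):.3f}")
```

The training error can only go down as the degree grows, so on its own it rewards overfitting; the gap between training and cross-validated error is what exposes it. Note that if the degree were chosen by minimising this same CV score, the winning score would itself be optimistic.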

2. The art of “cherry picking”

Also known as the fallacy of incomplete evidence, cherry picking is selecting the results that fit a specific claim and excluding those that don’t. It is common in public debates and politics, where both sides can present data that back their position. Cherry picking, whether intentional or accidental, is one of the most prominent mistakes made when presenting data-based facts. The practice is not only dishonest and misleading to the public, but also undermines the credibility of experimental findings and of the techniques used to obtain them.

Another well-known problem, closely related to cherry picking, is the publication fallacy, also called publication bias: interesting findings (for example, statistically significant ones) are more likely to be published than null results, which distorts our impression of reality and influences the development and conclusions of data-based research.
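A small simulation shows how publishing only "interesting" results distorts the picture. This is a sketch in Python with NumPy under simplifying assumptions: every hypothetical study measures an effect whose true value is zero, the standard deviation is known so a simple z-test can be used, and a study is "published" only if it finds a significant positive effect. The function name and parameter values are illustrative.

```python
import numpy as np


def simulate_publication_bias(n_studies=2000, n_per_study=20,
                              z_crit=1.96, seed=0):
    """Hypothetical setup: many small studies of an effect that is truly zero."""
    rng = np.random.default_rng(seed)
    # Every study samples from the same population, where the true effect is 0.
    samples = rng.normal(0.0, 1.0, size=(n_studies, n_per_study))
    effects = samples.mean(axis=1)
    # z statistic assuming a known standard deviation of 1 (a simplification).
    z = effects / (1.0 / np.sqrt(n_per_study))
    # Only "interesting" (significant, positive) results get published.
    published = effects[z > z_crit]
    return effects, published


if __name__ == "__main__":
    effects, published = simulate_publication_bias()
    print(f"all studies: n={effects.size}, mean effect {effects.mean():+.3f}")
    print(f"published:   n={published.size}, mean effect {published.mean():+.3f}")
```

By construction, every published study reports an effect above 1.96/√20 ≈ 0.44, even though the true effect is zero; a reader who only sees the published studies would conclude there is a real, sizeable effect. Cherry picking within a single analysis filters results in exactly the same way.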

3. The cobra effect

Also known as the “perverse incentive”, the cobra effect occurs when an incentive accidentally produces the opposite of the intended result. The term is said to come from an infamous program created by the British government to reduce the cobra population in India. The British announced that they would pay anyone who brought them a dead cobra, so people started breeding cobras to profit from the program. When the government discovered these farms, it ended the program, and the breeders, now left with worthless snakes, set them free. In the end, the total cobra population increased considerably, the opposite of what the British government wanted.

What does this story have to do with Data Science? It is not uncommon for data-based applications to fail because of this problem. A good example is an application whose recommendation engine is built on purchase history. Such recommenders are normally trained on customers’ positive feedback (what they bought), so the items they recommend get bought more, which in turn makes them recommended even more. Over time, this loop changes consumer behaviour and market structure: it encourages brand monopolies and raises the barrier to entry for new products or better substitutes. Customers are discouraged from trying new things, so the system receives little feedback about alternatives to learn from. As a result, it recommends the same brands and products over and over again instead of suggesting new ones, which is precisely what a recommender system is meant to do.
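The feedback loop can be reproduced with a toy simulation. This Python sketch (NumPy assumed) uses a deliberately naive, hypothetical recommender that always shows the three most-purchased items; customers mostly buy what they are shown, and every purchase is fed back as positive feedback. The starting counts, the 90% follow rate and the function names are illustrative assumptions, not a model of any real system.

```python
import numpy as np


def top_k_share(counts, k):
    """Fraction of all recorded purchases that belong to the k most-bought items."""
    top = np.sort(counts)[::-1][:k]
    return float(top.sum() / counts.sum())


def simulate_feedback_loop(n_items=20, k=3, rounds=5000,
                           p_follow=0.9, seed=0):
    rng = np.random.default_rng(seed)
    # Hypothetical purchase history: a few purchases per item to start with.
    counts = rng.integers(1, 10, size=n_items).astype(float)
    initial_share = top_k_share(counts, k)
    ever_recommended = set()
    for _ in range(rounds):
        # Naive popularity recommender: show the k most-purchased items.
        recommended = np.argsort(counts)[::-1][:k]
        ever_recommended.update(int(i) for i in recommended)
        # Most customers buy a recommended item; a few browse the whole catalogue.
        if rng.random() < p_follow:
            choice = rng.choice(recommended)
        else:
            choice = rng.integers(n_items)
        # Each purchase is fed back into the recommender as positive feedback.
        counts[choice] += 1
    return initial_share, top_k_share(counts, k), ever_recommended


if __name__ == "__main__":
    before, after, seen = simulate_feedback_loop()
    print(f"top-3 share of purchases: {before:.2f} -> {after:.2f}")
    print(f"items ever recommended: {len(seen)} of 20")
```

Whatever the starting history, a handful of items end up with almost all of the purchases, and the rest of the catalogue never collects enough feedback to be recommended.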

4. The gambler’s fallacy and the regression fallacy

The gambler’s fallacy is the mistaken belief that, because something has happened more frequently than usual in the recent past, it is now less likely to happen (and vice versa). The key to understanding this fallacy is the law of large numbers and its relation to regression toward the mean, as applied to big datasets. The law of large numbers states that the average of the results obtained by performing the same experiment a large number of times should be close to the expected value, and will tend to get closer as more trials are performed. It does not work by compensating for past deviations: for independent trials such as coin flips, a streak does not make the opposite outcome more likely, it is simply diluted by the many trials that follow. Moreover, the law only applies to a large number of observations; no principle guarantees that a small number of observations will match the expected value. It is therefore necessary to check the size of the dataset before drawing conclusions from it, to determine whether an apparent pattern is real or an artefact of too few observations.
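A quick simulation separates the two ideas. This Python sketch (NumPy assumed; the seed, sample size and streak length are arbitrary choices, and the helper names are hypothetical) flips a simulated fair coin: the running share of heads drifts toward 0.5 only as the number of flips grows, while the flip that follows a run of five heads is still heads about half the time.

```python
import numpy as np


def running_mean(flips):
    """Proportion of heads after each flip."""
    return np.cumsum(flips) / np.arange(1, len(flips) + 1)


def heads_after_streak(flips, streak=5):
    """Share of heads on the flip immediately following `streak` heads in a row."""
    window_sums = np.convolve(flips, np.ones(streak, dtype=int), mode="valid")
    # window_sums[j] covers flips[j : j + streak]; the next flip is flips[j + streak].
    after_streak = flips[streak:][window_sums[:-1] == streak]
    return float(after_streak.mean()), int(after_streak.size)


if __name__ == "__main__":
    rng = np.random.default_rng(42)
    flips = rng.integers(0, 2, size=200_000)  # fair coin: 1 = heads, 0 = tails
    means = running_mean(flips)
    for n in (10, 100, 1_000, 200_000):
        print(f"after {n:>7,} flips: share of heads = {means[n - 1]:.3f}")
    share, count = heads_after_streak(flips)
    print(f"heads after 5 heads in a row: {share:.3f} (over {count} streaks)")
```

The early running averages can sit well away from 0.5, which is exactly the small-sample noise the law of large numbers says nothing about. The streak check is what refutes the gambler’s fallacy: the coin has no memory.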

The concept of regression toward the mean also gives rise to the regression fallacy. When something unusually good or bad happens, later observations tend to be closer to the average, simply because extreme values are partly due to chance. The fallacy is to attribute this return to the average to some cause, such as an action taken in the meantime. In reality, statistical regression toward the mean is not a causal phenomenon. The fallacy is often used to “find” a causal explanation for outliers generated by a process in which natural fluctuations exist.
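The mechanism can be observed without any cause at all. In this Python sketch (NumPy assumed), each hypothetical score is a fixed "skill" plus independent random "luck", and nothing at all happens between the two measurements. The model, the 10% cut-off and the parameter values are illustrative assumptions.

```python
import numpy as np


def regression_to_mean_demo(n=100_000, top_fraction=0.1, seed=7):
    rng = np.random.default_rng(seed)
    # Hypothetical model: each score = stable skill + independent luck.
    skill = rng.normal(0.0, 1.0, n)
    first = skill + rng.normal(0.0, 1.0, n)
    second = skill + rng.normal(0.0, 1.0, n)  # no intervention in between
    # Select the extreme groups using the first measurement only.
    top = first >= np.quantile(first, 1 - top_fraction)
    bottom = first <= np.quantile(first, top_fraction)
    return {
        "top_first": float(first[top].mean()),
        "top_second": float(second[top].mean()),
        "bottom_first": float(first[bottom].mean()),
        "bottom_second": float(second[bottom].mean()),
    }


if __name__ == "__main__":
    for name, value in regression_to_mean_demo().items():
        print(f"{name:>13}: {value:+.2f}")
```

The top scorers of the first round do worse in the second round and the bottom scorers do better, purely because part of their extreme first score was luck. Had some treatment been applied to the bottom group in between, it would be tempting, and fallacious, to credit it with the improvement.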

5. The McNamara fallacy

This fallacy, also known as the quantitative fallacy, is named after Robert McNamara, former US Secretary of Defense, and refers to his belief during the Vietnam War that enemy body counts were a precise and objective measure of success. The fallacy consists of making decisions based solely on quantitative observations or metrics and ignoring everything else, often on the grounds that the other observations cannot be proven.

Apart from being a good example of blind numerical reasoning gone wrong, the McNamara fallacy illustrates three common problems in Data Analysis. First, measuring whatever can be easily measured, a common practice in descriptive analysis. Second, disregarding what cannot be easily measured and presuming it is not important. And last, presuming that what cannot be easily measured does not exist.