It is clear that the volume of data keeps growing, and the infrastructure to handle it has become more powerful. Interest in data science solutions is strong, judging by the impressive number of startups concentrating on data science, machine learning, AI algorithms, or other data-related fields. Overall, the current climate is favourable to data science solutions, and companies in every domain, including aviation, are leveraging the growing number of machine learning techniques and their democratisation to build models that capture the underlying behaviour of the data, in the hope of turning them into working applications. The options are limitless, but unlike a startup specialising in a very specific and precise problem, large companies and complex sectors such as aviation must address diverse data with a wide range of challenges. The question that immediately arises is: Is it possible to solve all operational problems using data science?

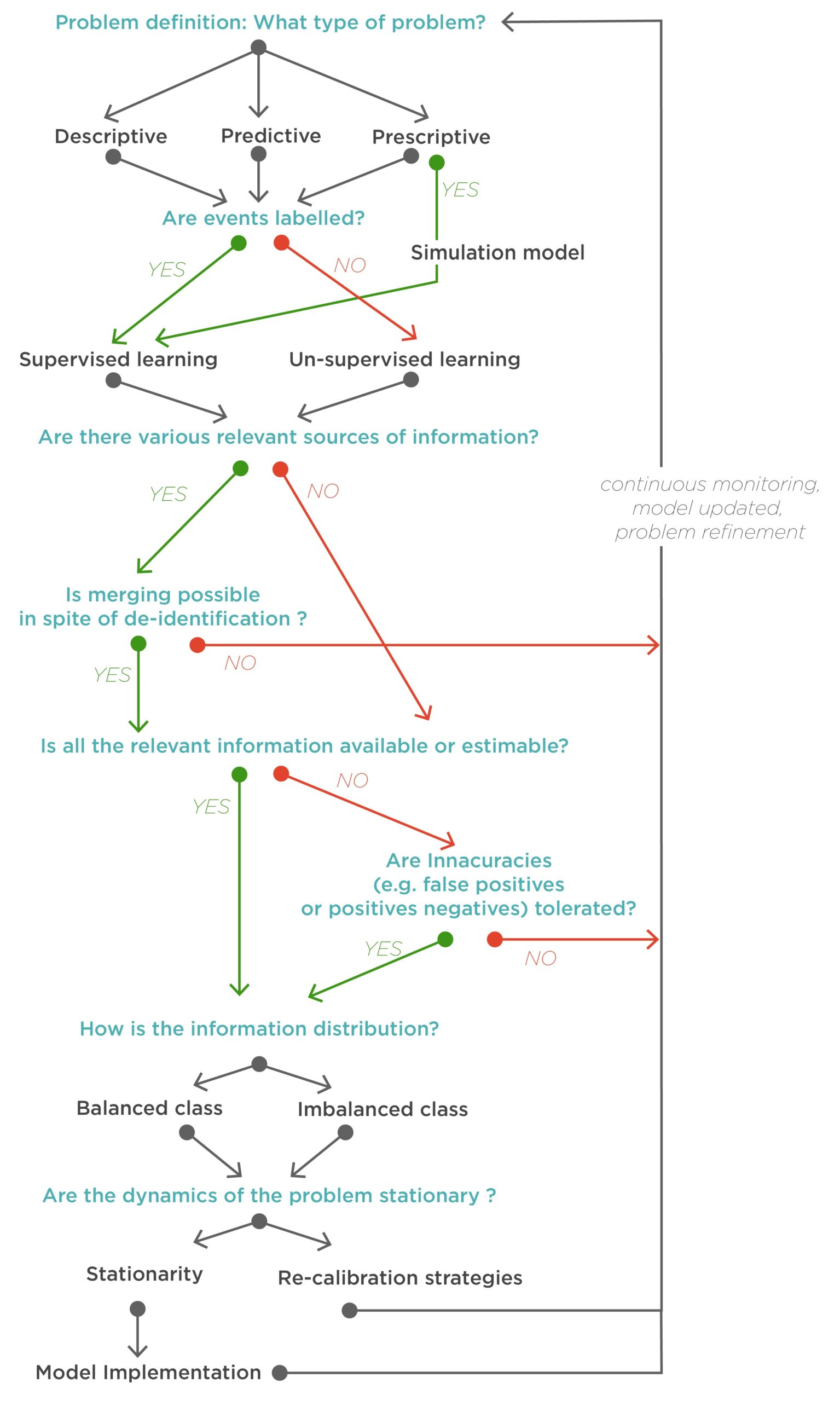

Unfortunately, the answer is no. Or, at the very least, not efficiently. Many parameters must be favourable for any model to result in a working application. In this blog post, we would like to propose an evaluation strategy to assess the risk of failure or the chance of success (depending on how optimistic the reader is today) of a particular data science project. This idea was inspired by Amazon’s “working backwards” approach, as described by Ian McAllister, a general manager at Amazon. The concept is simple: “We work backwards from the customer, rather than starting with an idea for a product and trying to bolt customers onto it.” This means that for any new initiative or idea, an internal press release announces what the finished product will be, explaining how it is “centered around the customer problem, how current solutions (internal or external) fail, and how the new product will blow away existing solutions”, states McAllister. The company then iterates on the press release until it obtains a satisfying result. Why? Because iterating on a press release is a lot quicker and less expensive than iterating on the product itself. This rationale is also applicable to aviation and data science. It is much better to think ahead about the risks and success factors of a data science project than to test every option at a considerable cost in resources.