Certification of AI systems by 2025 – utopia or reality? 1/2

Paula Lopez

For more than three years, we have regularly brought you initiatives and developments oriented towards achieving higher levels of automation in the aviation sector through the implementation of data-driven and Artificial Intelligence techniques. Though there has been some progress in the field, at least in the number of initiatives, none of them have been truly integrated into operations.

While there is no doubt about the benefits of these cost-efficient solutions and how they can help in different applications, from forensic analysis to decision support tools based on ML predictions, there are still some barriers to the final implementation and commercialization of systems and tools. One of those is certification. In parallel with the increasing number of AI solutions, various national and European authorities have launched initiatives to evolve current certification processes into an “AI-friendly” certification procedure that ensures safety in AI-based products.

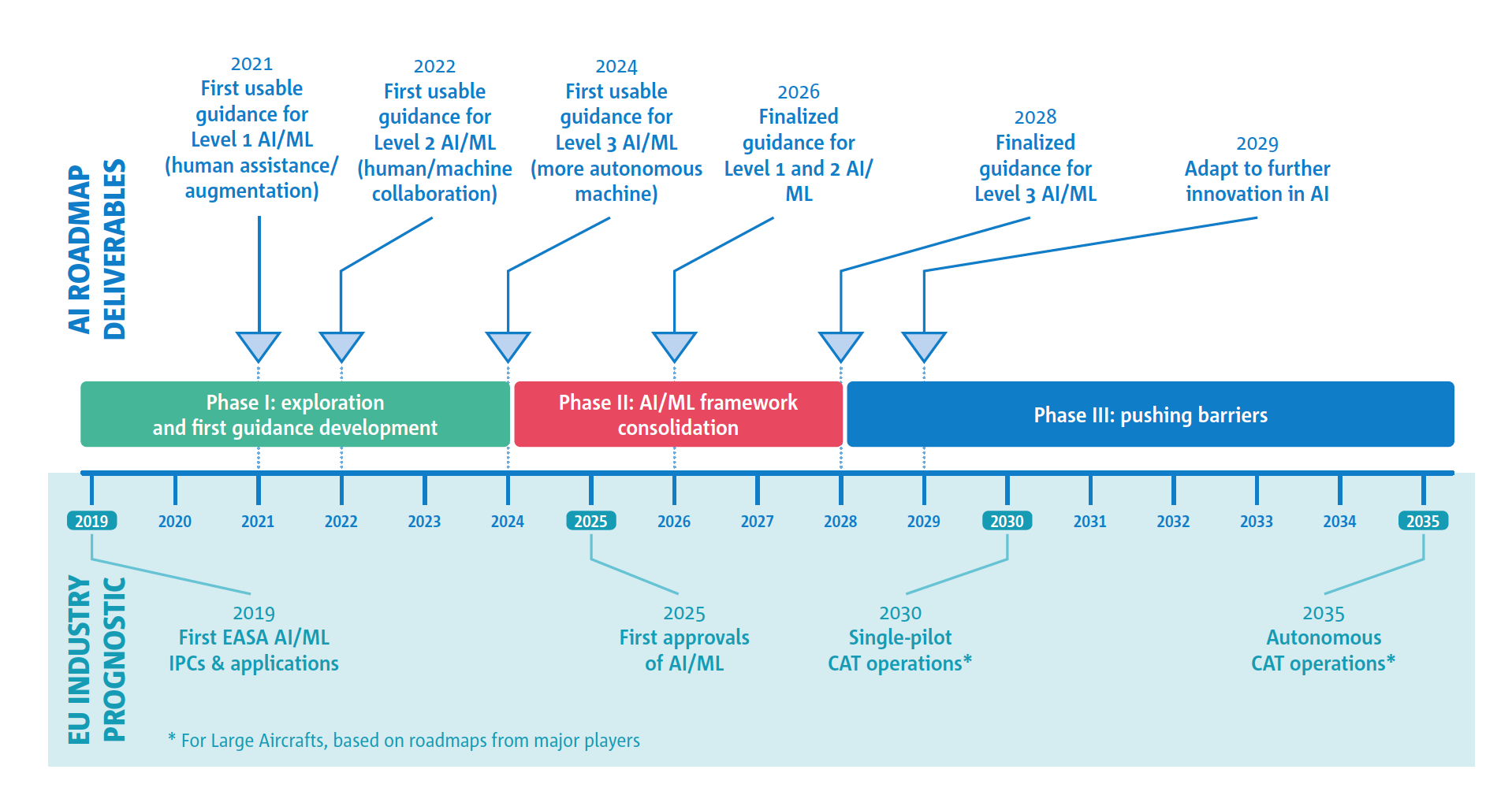

In 2020, EASA published its “Artificial Intelligence Roadmap: A human-centric approach to AI in aviation”, aimed at enabling the first certification of AI in aircraft systems by 2025. The introduction of these techniques into our sector will impact not only certification but also other processes, like rule-making, organisation approvals or standardisation and, as a consequence, the competency framework of Agency staff. At the same time, the potential ramifications for liability, ethics, and society need to be considered in line with the European approach to AI fostered by the High-Level Expert Group on AI, AI HLEG (https://ec.europa.eu/digital-single-market/en/high-level-expert-group-artificial-intelligence). Despite the challenging nature of these necessary changes, they at least demonstrate the Agency’s willingness to support industry demand.

While the Roadmap is still a very high-level document, some ideas are worth consideration for the different aviation applications foreseen:

- Aircraft design and operations, where computer vision and NLP techniques are expected to be applied to pilot assistance and decision support, especially in non-critical tasks. While fully autonomous flight is considered, it is expected to be driven by the drone market at a later stage.

- Aircraft production and maintenance: the increased level of digitalization produces a huge number of data sets, opening opportunities for AI application in both aircraft manufacturing and predictive maintenance, one of the more advanced areas where predictive capabilities are developed in the aviation sector.

- Air Traffic Management, where different automation solutions are being developed at the research level to assist ATCOs in repetitive/non-safety-critical tasks.

- Drones and U-space, which includes urban air mobility as a potential business case. The particularities of this sector require non-traditional approaches and pave the way for implementing AI/ML solutions at different levels, such as detect and avoid or autonomous localization.

- Safety Risk Management, where AI can increase safety by detecting emerging risks, classifying occurrences and prioritising safety issues, as demonstrated in the SafeClouds project and EASA’s Data4Safety.

- Environmental impact, which can be reduced by using AI solutions that enable trajectory optimization to reduce noise and pollutant emissions.

The growing application of these techniques, enabled by higher levels of digitalization, is also expected to bring new cybersecurity threats. Implementing these techniques may be impossible without the necessary EU regulations in place to evaluate the safety of AI/ML solutions. While some existing regulations remain valid, additional means of compliance, adaptations and standards need to be developed for the EASA roadmap to be put into practice. In terms of timeline, EASA expects to certify its first AI solution by 2025. This first solution is expected to be a pilot-assistance tool, in line with the industry roadmaps. After 2025, an incremental path has been proposed to reach full autonomy by 2035: from crew assistance (2022-2025), through human-machine collaboration (2025-2030), to the final step of autonomous commercial air transport by 2035. To guide this progress, EASA will prepare and publish the corresponding deliverables following this roadmap.

Source: Artificial Intelligence Roadmap, 2020, EASA

In its roadmap, EASA highlights that “the trustworthiness concept will necessarily be a key enabler of the societal acceptance of AI” and dedicates a large part of its report to this aspect and the challenges linked to it. Most of the challenges presented are linked to the probabilistic nature of ML applications and their associated uncertainty, variance and bias. Other challenges relate to the need to develop new validation, verification, and evaluation methods for ML applications and their robustness, especially considering their adaptive learning capabilities. In a follow-up publication, we will discuss the trustworthiness analysis in more detail.

The publication of this first installment of a yearly AI Roadmap is a big step in making AI in aviation more of a reality.

Ref:

https://www.easa.europa.eu/sites/default/files/dfu/EASA-AI-Roadmap-v1.0.pdf