From batch processing to streaming processing in Aviation

Dario Martinez

Batch vs Streaming processing

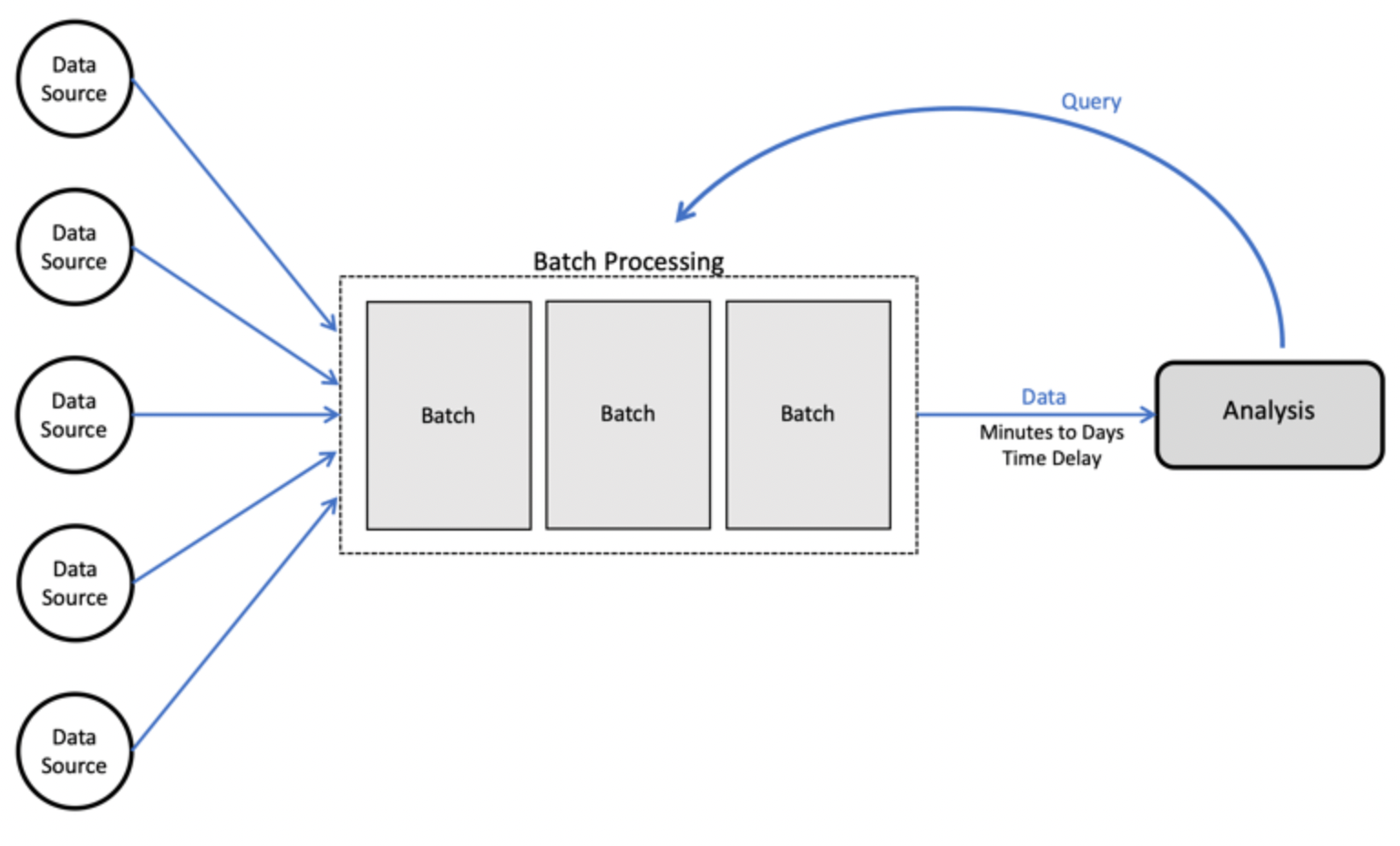

In traditional data pipelines, analysing today’s events often means waiting until tomorrow, once the overnight jobs have finished. This is usually referred to as batch processing. In batch processing, we wait for a certain amount of raw data to “pile up” before running an ETL job. Typically, data accumulates for anywhere from an hour to a few days before it is analysed. Batch ETL jobs usually run on a set schedule (e.g. every 24 hours) or, in some cases, once the amount of data reaches a certain threshold.
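The two batch triggers described above (a fixed schedule or a data-volume threshold) can be sketched in a few lines of Python. This is only an illustration: `BatchBuffer`, its parameters and the injected `clock` are hypothetical names, and the "ETL job" is any callable that receives the accumulated records.

```python
import time
from typing import Any, Callable, List


class BatchBuffer:
    """Accumulates records and runs a batch job on a size or age trigger."""

    def __init__(
        self,
        job: Callable[[List[Any]], None],      # hypothetical ETL job
        max_records: int = 1000,               # volume threshold (assumed value)
        max_age_seconds: float = 24 * 3600.0,  # schedule, e.g. every 24 hours
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._job = job
        self._max_records = max_records
        self._max_age = max_age_seconds
        self._clock = clock
        self._records: List[Any] = []
        self._window_start = clock()

    def add(self, record: Any) -> bool:
        """Store a record; return True if this call triggered a batch run."""
        self._records.append(record)
        too_many = len(self._records) >= self._max_records
        too_old = self._clock() - self._window_start >= self._max_age
        if too_many or too_old:
            self.flush()
            return True
        return False

    def flush(self) -> None:
        """Run the job on everything collected so far and start a new window."""
        if self._records:
            self._job(list(self._records))
        self._records.clear()
        self._window_start = self._clock()
```

Note that the age check only happens when a record arrives; a real scheduler would also fire on a timer when no data is coming in. Either way, no record is analysed until its whole batch is processed, which is the latency that streaming aims to remove.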

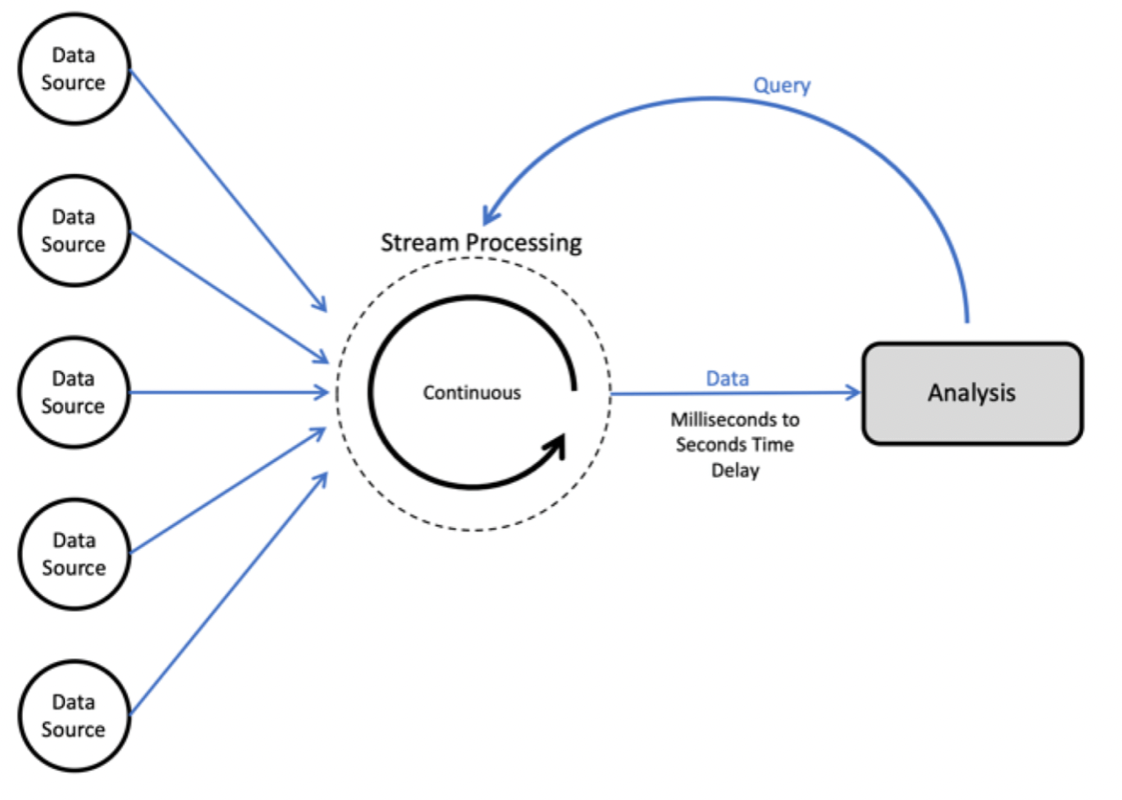

In many industries (including the aviation industry), data becomes available in a matter of seconds (e.g. aircraft positions), minutes (e.g. meteorological information) or hours (e.g. flight plans and regulations data). In such scenarios, real-time stream processing is a paradigm worth leveraging. Data is processed as soon as it arrives at the data platform, typically within sub-second timeframes. For the end user, this is close enough to be perceived as real-time. These operations usually keep only a “small” state, and as such involve relatively simple transformations or calculations. Real-time stream processing technologies are undoubtedly powerful. However, they introduce considerable complexity into infrastructure and data pipelines. Tooling, data formats and the overall complexity of the requirements of real-time processing systems are a challenge for any data engineer.
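To make the idea of a “small” state concrete, here is a minimal Python sketch that keeps a running mean of ground speed per aircraft and emits an updated value for every incoming event. The event shape `(aircraft_id, speed)` and the names `RunningMean` and `stream_mean_speed` are assumptions made for illustration; they do not come from any particular streaming framework.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, Tuple


@dataclass
class RunningMean:
    """Constant-size state: a count and the current mean, nothing else."""

    count: int = 0
    mean: float = 0.0

    def update(self, value: float) -> float:
        # Incremental mean: no need to keep past values in memory.
        self.count += 1
        self.mean += (value - self.mean) / self.count
        return self.mean


def stream_mean_speed(
    events: Iterable[Tuple[str, float]],
) -> Iterator[Tuple[str, float]]:
    """Yield (aircraft_id, mean speed so far) as each event arrives."""
    state: Dict[str, RunningMean] = defaultdict(RunningMean)
    for aircraft_id, speed in events:
        yield aircraft_id, state[aircraft_id].update(speed)
```

Each event is handled once, on arrival, and the state held per aircraft is just two numbers. That is what keeps the per-event work simple enough for sub-second latencies.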

The real-time streaming stack and methodology

When looking into technologies that enable real-time processing, the usual frameworks come to mind: Apache Kafka, Flink, Spark Streaming… When adopting these frameworks, you need to take the following requirements into account:

- continuously ingesting and processing reasonably large amounts of real-time data

- anticipating multiple producers and consumers and decoupling their communication

- having total control over the underlying infrastructure
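The decoupling requirement in the list above can be illustrated with an in-process stand-in for a message broker. The sketch below uses Python's standard `queue.Queue` and threads; it is not Kafka's API, and `producer`, `consumer` and `run_demo` are hypothetical names. Producers and consumers only know about the queue, never about each other.

```python
import queue
import threading
from typing import List


def producer(name: str, n_messages: int, broker: queue.Queue) -> None:
    """Publish messages without knowing who will read them."""
    for i in range(n_messages):
        broker.put(f"{name}-{i}")


def consumer(broker: queue.Queue, sink: List[str]) -> None:
    """Read messages until a None sentinel signals shutdown."""
    while True:
        message = broker.get()
        if message is None:
            break
        sink.append(message)


def run_demo(
    num_producers: int = 2, num_consumers: int = 2, messages_each: int = 3
) -> List[str]:
    broker: queue.Queue = queue.Queue()
    sink: List[str] = []
    consumers = [
        threading.Thread(target=consumer, args=(broker, sink))
        for _ in range(num_consumers)
    ]
    producers = [
        threading.Thread(target=producer, args=(f"p{i}", messages_each, broker))
        for i in range(num_producers)
    ]
    for thread in consumers + producers:
        thread.start()
    for thread in producers:
        thread.join()
    # One sentinel per consumer so that every consumer stops.
    for _ in consumers:
        broker.put(None)
    for thread in consumers:
        thread.join()
    return sorted(sink)
```

A distributed broker adds persistence, partitioning and replication on top of this basic pattern, which is where much of the operational complexity comes from.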

While many companies and services attempt to facilitate the management of underlying distributed clusters, the architecture still remains fairly complex. Therefore, you also need to consider:

- whether you have the resources to operate those clusters

- how much data you plan to process with this platform

- whether the added complexity is worth the effort

What are real-time streaming processing use cases outside the aviation industry?

The following are some of the use cases:

- Algorithmic Trading, Stock Market Surveillance

- Smart Patient Care

- Monitoring a production line

- Supply chain optimizations

- Intrusion, Surveillance and Fraud Detection (e.g. Uber)

- Most Smart Device Applications: Smart Car, Smart Home

- Smart Grid (e.g. load prediction and outlier plug detection; see Smart grids, 4 Billion events, throughput in range of 100Ks)

- Traffic Monitoring, Geofencing, Vehicle, and Wildlife tracking (e.g. TFL London Transport Management System)

- Sports analytics: augmenting sports with real-time analytics (e.g. this analysis over a real football game, Overlaying realtime analytics on Football Broadcasts)

- Context-aware promotions and advertising

- Computer system and network monitoring

- Predictive Maintenance (e.g. Machine Learning Techniques for Predictive Maintenance)

- Geospatial data processing

What about the aviation industry?

In the aviation industry, there are many data sources that enable real-time streaming case studies:

- Automatic Dependent Surveillance-Broadcast (ADS-B) data is usually served as a stream of aircraft position data. Many companies such as FlightAware provide APIs that enable immediate real-time streaming analytics. Analysing these streams at real-time speed is a paradigm that not many companies have yet looked into. Some common aviation case studies could be extended to real-time, such as trajectory prediction and sector complexity forecasting.

- Flight Data Monitoring (FDM) has gradually increased in use across aviation. It’s a natural progression from the black boxes of the past, which provided a way for aircraft operators to investigate accidents. As an investigative tool, though, black boxes were often complex and technical. Despite advances in data science and the effort of some companies to provide analytics over FDM, the wealth of valuable insights that this data source can provide has yet to be fully realised. With the adoption of real-time stream processing, airlines and manufacturers could use FDM data analysis to its full potential, enabling case studies involving real-time recommendations and predictive alert systems, such as detecting unstable approaches and flight anomalies in real-time.

- Airport data-driven cases are quite challenging given the number and variety of data sources and data providers (airline ticketing, baggage transportation, passenger movements, retail, weather, etc.). Furthermore, some of these providers deliver data through legacy interfaces, which raises the complexity of the case study even further. However, with the correct stack of technologies and sufficient expertise in data-driven real-time case studies, any challenge is doable.
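As an illustration of the predictive alert idea mentioned for FDM data above, the following Python sketch flags samples that look like an unstable approach as they arrive. Everything here is an assumption made for the example: the `FdmSample` fields, the function name and especially the threshold values, which are placeholders and not operational criteria.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List, Tuple


@dataclass(frozen=True)
class FdmSample:
    """Hypothetical, simplified FDM record."""

    timestamp: float       # seconds
    height_ft: float       # height above the runway, in feet
    sink_rate_fpm: float   # descent rate in feet per minute (positive = down)
    speed_dev_kt: float    # airspeed minus target approach speed, in knots


# Illustrative thresholds only; real criteria are defined by each operator.
GATE_HEIGHT_FT = 1000.0
MAX_SINK_RATE_FPM = 1000.0
MAX_SPEED_DEV_KT = 20.0


def unstable_approach_alerts(
    samples: Iterable[FdmSample],
) -> Iterator[Tuple[float, List[str]]]:
    """Yield (timestamp, reasons) for each sample that breaks a threshold."""
    for sample in samples:
        if sample.height_ft > GATE_HEIGHT_FT:
            continue  # above the gate, the criteria do not apply yet
        reasons: List[str] = []
        if sample.sink_rate_fpm > MAX_SINK_RATE_FPM:
            reasons.append("sink rate")
        if abs(sample.speed_dev_kt) > MAX_SPEED_DEV_KT:
            reasons.append("speed deviation")
        if reasons:
            yield sample.timestamp, reasons
```

Because the function is a generator over an iterable, it can consume a live feed one sample at a time and raise an alert while the approach is still in progress, not after the flight has landed.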

Do you have any challenging case studies that may involve streaming processing? Are you looking to level up your data analysis capabilities by implementing real-time data pipelines? If you are interested in collaborating with us at DataBeacon, contact us!